Pokémon Go-style avatar to end communication barriers with the deaf community

Posted 4 July, 2018

An exciting virtual sign language project under development at UCD will transform how deaf people communicate with the world.

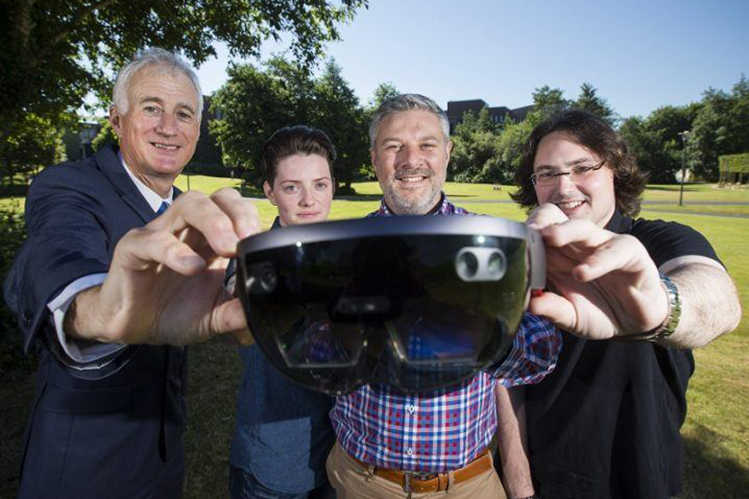

Working alongside tech giant Microsoft, researchers at University College Dublin and Lero, an SFI-funded research centre, have designed a system that translates the spoken word into Irish Sign Language (ISL) and vice versa.

With the help of deaf students studying at University College Dublin through its Access programme, the team has developed a working prototype that can interpret the hand gestures used in ISL.

Built on augmented reality technology similar to that found in Pokémon Go, the system uses a digital avatar to translate ISL into computer-generated speech.

When a non-deaf person wears the headset, the avatar appears on screen and translates the deaf person's sign language into speech.

Listen to the full interview from @ElayneRuane on @RTERadio1 this morning talking about virtual Irish sign language interpreter project https://t.co/yIlKZZf1a2 @scienceirel @UCD_Research @Microsoftirl @Minoan_Boy

Lero (@leronews), July 4, 2018

Philip Power, a deaf student studying law at UCD, said that when he first used the prototype, he immediately imagined how much it could transform his life and the lives of other deaf people.

“It will be of great benefit to students and in everyday life as well. It will help create smoother communication for everyone,” he said.

“It will be really great for deaf people because there are still a lot of barriers where they can’t access the non-deaf community.”

Leah Ennis McLoughlin, another deaf student at UCD who is helping to develop the system, said: “It’s great to see deaf people finally being viewed as a priority.”

Dr Anthony Ventresque, of the UCD School of Computer Science and Director of the UCD Complex Software Lab, is leading the programme. He hopes the team can refine the system over the next three to five years so that it can also recognise the facial expressions used during communication.

Pokémon Go is an augmented reality game for iOS and Android devices that allows users to capture and train avatars of their favourite Pokémon characters.

The prototype developed at UCD combines Microsoft’s Skype and the company’s HoloLens augmented reality goggles with other tools such as LUIS (Language Understanding Intelligent Service), Azure Cognitive Services and Xbox depth camera technologies.

When complete, the system will be able to use a phone or headset to translate ISL into computer-generated speech and to convert speech back into ISL.

The research project is being carried out in conjunction with Microsoft through its Skype4Good initiative.

By: David Kearns, Digital Journalist / Media Officer, UCD University Relations